Simon Glairy is a distinguished authority at the intersection of commercial insurance and Insurtech, currently at the forefront of analyzing how artificial intelligence reshapes enterprise risk. With extensive experience in AI-driven risk assessment, he has spent years advising global firms on how to navigate the shift from digital experimentation to full-scale operational integration. As organizations grapple with the “governance gap,” where the speed of AI adoption outpaces the implementation of safety protocols, Simon’s insights provide a vital roadmap for maintaining both profitability and professional integrity in an increasingly automated landscape.

In this discussion, we explore the transition of AI from a pilot phase to a core business driver, examining why a staggering 43% of firms still lack formal risk frameworks despite heavy investment in employee training. Simon breaks down the critical importance of preserving “human ingenuity” in complex underwriting and claims, the emerging legal threats to directors and officers, and the practical steps businesses must take to satisfy rigorous new international regulations like the EU AI Act.

Many organizations are seeing significant productivity gains by using AI to handle repetitive tasks like document triage. How are these tools specifically augmenting human workers rather than replacing them, and what metrics should leaders track to ensure this balance remains effective?

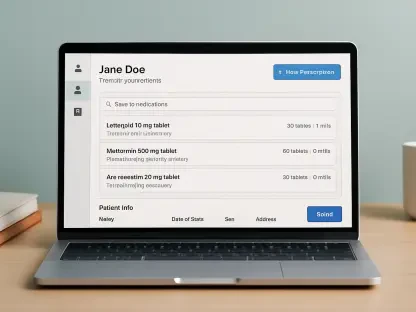

The current trend we are seeing is a shift from experimentation to enablement, where 86% of businesses report tangible productivity gains. Instead of replacing the professional, AI is acting as a “force multiplier” by absorbing the drudgery of document handling, triage, and underwriting workbenches. This allows a human underwriter to move away from manual data entry and focus on creative ideation and high-level client strategy. To ensure this balance is working, leaders should monitor “value-added time” metrics—specifically tracking whether the hours saved on routine analysis are being reinvested into solving complex risk engineering problems or deepening client relationships. If you see a 20% increase in document processing speed but no corresponding rise in the quality of complex decision-making, you aren’t augmenting; you are simply accelerating a bottleneck.
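
One way to make the “value-added time” idea concrete is a reinvestment rate: the share of AI-saved hours that actually moved into complex risk work or client relationships. The sketch below is illustrative only; the `WeeklyTimeLog` fields and the figures are hypothetical and assume a firm already records time at this level of detail.

```python
from dataclasses import dataclass


@dataclass
class WeeklyTimeLog:
    """Hypothetical weekly time log for one underwriter, in hours."""
    hours_saved_by_ai: float       # routine work absorbed by AI tooling
    hours_on_complex_risk: float   # additional hours spent on complex risk problems
    hours_on_client_work: float    # additional hours spent on client relationships


def reinvestment_rate(log: WeeklyTimeLog) -> float:
    """Share of AI-saved hours moved into higher-value work, capped at 1.0."""
    if log.hours_saved_by_ai <= 0:
        return 0.0
    reinvested = log.hours_on_complex_risk + log.hours_on_client_work
    return min(reinvested / log.hours_saved_by_ai, 1.0)


# Illustrative figures only: 8 hours saved, 4 of them reinvested.
log = WeeklyTimeLog(hours_saved_by_ai=8.0, hours_on_complex_risk=3.0, hours_on_client_work=1.0)
print(f"Reinvestment rate: {reinvestment_rate(log):.0%}")  # prints "Reinvestment rate: 50%"
```

A rate that stays low while processing speed climbs would be one signal of the “accelerated bottleneck” described above.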

While investment in AI training and hiring is rising, many firms still operate without formal risk management frameworks. What are the immediate legal and operational dangers of this oversight gap, and what practical steps can a company take to build a robust governance structure?

The danger is palpable because, while 62% of firms have delivered AI training, 43% still lack a formal risk management framework. This oversight gap leaves companies wide open to “silent” exposures in Technology Errors & Omissions (E&O), cyber, and employment practices liability, particularly if an unmonitored algorithm introduces bias into hiring or credit decisions. An immediate operational danger is data leakage from training sets, which can lead to catastrophic reputational damage and regulatory fines. To bridge this, a company must first implement a centralized AI strategy—something only 56% of firms have currently communicated—and then mandate that every AI use case be mapped against existing internal controls. This structure must include a clear escalation path for algorithmic failures, ensuring that automated decisions can be explained to both a judge and a regulator.
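
Mapping use cases against internal controls can start as a simple inventory check. The sketch below assumes a hypothetical inventory and a hypothetical minimum control set; the use-case and control names are placeholders, not a standard taxonomy.

```python
# Hypothetical inventory: each AI use case lists the internal controls that already cover it.
use_case_controls = {
    "document_triage": ["access_review", "data_retention_policy"],
    "candidate_screening": [],
    "credit_pre_scoring": ["model_validation"],
}

# Hypothetical minimum control set every AI use case is expected to have.
required_controls = {"model_validation", "escalation_path"}


def find_control_gaps(inventory: dict[str, list[str]], required: set[str]) -> dict[str, list[str]]:
    """Return, per use case, the required controls that are not yet mapped to it."""
    gaps = {}
    for use_case, controls in inventory.items():
        missing = sorted(required - set(controls))
        if missing:
            gaps[use_case] = missing
    return gaps


for use_case, missing in find_control_gaps(use_case_controls, required_controls).items():
    print(f"{use_case}: missing {', '.join(missing)}")
```

Any use case that appears in the output has no documented escalation path or validation step, which is where “silent” exposures are most likely to sit.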

Even with rapid technological adoption, human judgment remains critical for solving complex problems and maintaining client relationships. How can businesses protect the “human touch” in their operations, and what specific scenarios still require human creativity over algorithmic efficiency?

Protecting the “human touch” is not just a sentiment; it is a strategic differentiator, with 34% of businesses explicitly citing it as a reason for retaining staff roles. We find that scenarios involving nuanced negotiation, such as large-corporate claims or specialty commercial lines, require a level of empathy and creative problem-solving that a machine simply cannot replicate. Algorithms excel at pattern recognition in historical data, but they struggle with “black swan” events or complex risk scenarios where there is no precedent. To maintain this balance, firms should purposefully “air-gap” certain high-stakes client interactions, ensuring that while AI provides the data-driven foundation, the final handshake and the interpretation of complex risk engineering remain firmly in human hands. This ensures that trust—the bedrock of the insurance industry—remains intact even as the backend becomes more automated.

AI integration is creating new exposures in areas like professional liability and directors and officers insurance. What specific red flags should underwriters look for when assessing a company’s AI maturity, and how do algorithmic failures typically manifest in modern litigation?

Underwriters are now moving beyond general questions to look for specific red flags, such as a lack of board-level oversight on AI strategy or the absence of a dedicated AI impact assessment—a document only 44% of firms currently produce. In modern litigation, algorithmic failures often manifest as systemic biases in pricing or HR outcomes, which can quickly escalate into class-action lawsuits or regulatory scrutiny from bodies like the NAIC or OSFI. We also see “AI-assisted social engineering” where the technology is weaponized to bypass traditional cyber defenses, creating a nightmare for D&O coverage. To mitigate these risks, companies must provide rigorous documentation of their model validation processes and show clear evidence of “human-in-the-loop” protocols. If a company cannot explain how its model reached a specific financial decision, it is essentially uninsurable in the current high-stakes environment.

With new regulations like the EU AI Act emerging, impact assessments are becoming a necessity for high-risk applications. What does a comprehensive AI impact assessment look like in practice, and how should organizations document their model validation to satisfy international supervisors?

A comprehensive AI impact assessment is no longer a “nice-to-have” but a regulatory anchor, especially as the EU AI Act phases in stricter obligations for “high-risk” financial applications through 2026. In practice, this assessment involves a granular audit of the training data to test for inherent bias and a “stress test” of the model’s decision-making logic under various edge cases. Organizations must document the entire lifecycle of the model, from the data source to the final output, ensuring there is a clear “audit trail” that explains why certain decisions were made. This documentation should be formatted to satisfy international supervisors like Canada’s OSFI, focusing heavily on transparency and the ability to “roll back” the AI’s influence if it begins to drift from its intended purpose. It is about proving that you have control over the machine, rather than the machine having control over the business.
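
A bias test of the kind described above can begin with something as simple as comparing approval rates across groups. The sketch below is one possible check, not a regulatory standard: the group labels and decisions are invented, and the 0.8 review threshold is an assumption borrowed from the “four-fifths” rule of thumb used in US employment contexts.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Compute the approval rate per group from (group, approved) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(decisions):
    """Ratio of the lowest group approval rate to the highest (1.0 means parity)."""
    rates = approval_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no approvals in any group, so no disparity to measure
    return min(rates.values()) / highest


# Invented sample: group A approved 8 of 10 times, group B approved 6 of 10 times.
decisions = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 6 + [("B", False)] * 4
)

REVIEW_THRESHOLD = 0.8  # assumed threshold, to be set by the firm's own governance policy
ratio = disparate_impact_ratio(decisions)
needs_review = ratio < REVIEW_THRESHOLD
print(f"Disparate impact ratio: {ratio:.2f}, needs review: {needs_review}")
```

Logging the inputs, the ratio, and the resulting decision each time the check runs is one way to start building the audit trail a supervisor would expect to see.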

As AI moves from the testing phase into daily operations, the role of risk consultants and brokers is evolving. How should these advisors help clients map existing controls to new AI use cases, and what granular questions should be asked during the insurance renewal process?

Brokers are moving from being simple intermediaries to becoming essential risk architects who help clients close the governance gap. During the renewal process, the questions must become much more surgical: Which specific workflows are algorithm-driven? How do you manage third-party AI vendors? What is your protocol for handling an “algorithmic hallucination” that impacts a client’s portfolio? Advisors must help clients map their existing cyber and E&O controls onto these new AI use cases to ensure there are no “gray areas” where coverage might fail. This evolution means that the long-term value of a broker will be measured by their ability to provide practical guidance on AI policies and incident response, distinguishing the mature, well-governed risks from the chaotic ones.

What is your forecast for the future of AI risk management?

I forecast that the “governance gap” will reach a breaking point within the next 24 months, leading to a massive market correction where AI-specific risk frameworks become as mandatory as basic cybersecurity is today. We will see a shift where insurance premiums are directly tied to the maturity of a firm’s AI impact assessments; those who cannot prove their models are ethical and transparent will face prohibitive costs or total exclusion. Ultimately, the industry will realize that the highest ROI doesn’t come from the most powerful algorithm, but from the most seamless integration of technology and human judgment. The firms that survive and thrive will be those that treat AI not as a replacement for talent, but as a tool to elevate human ingenuity to a level we’ve never seen before.