Simon Glairy has spent his career at the intersection of insurance, risk management, and AI. He’s helped carriers, MGAs, and brokers translate emerging technology into disciplined operations that respect regulation and earn customer trust. In this conversation, he unpacks how agentic AI can move from cautious pilots to production without losing the “human business” soul of insurance. Expect pragmatic guardrails, field-tested workflows, and a governance-first lens that keeps value, explainability, and accountability front and center.

Agentic AI can act independently in tasks like risk assessment and claims. Where would you deploy it first, why that workflow, and what metrics would prove it out in 90 days?

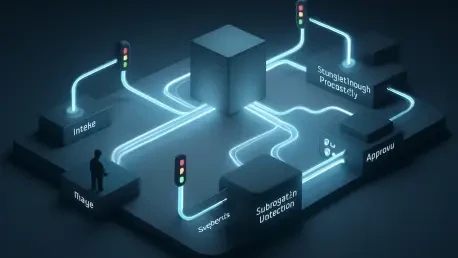

I’d start with subrogation opportunity detection and straight-through processing for low-severity claims. These are bounded, rules-heavy workflows where an agent can triage, gather evidence, and propose actions without touching the most sensitive customer moments. In the first 90 days, I’d track cycle time reduction, file-touch frequency, and leakage captured, alongside guardrail metrics like override rate and escalations. I want to see a measurable increase in straight-through rates, a visible drop in average handling time, and a stable or improved customer sentiment signal; if escalations remain within an agreed band and explainability holds up under spot audits, we’ve earned the right to expand.

Many insurers run tightly controlled pilots but hesitate to scale. What specific governance gates, KPIs, and redlines would move a pilot to production?

My gates are simple and strict: model risk review completed, data lineage documented end-to-end, and a working kill switch demonstrated in live-like conditions. KPIs must hit pre-agreed thresholds on accuracy, fairness checks, and operational impact, not just cost savings. Redlines include any unexplained drift, bias flags breaching tolerance, or customer detriment beyond the pilot’s contingency plan. If, over a defined evaluation window, we meet the KPI thresholds, pass audit sampling with clean explanations, and show stable behavior under load, we move—with tight scope and staged caps that gradually open as evidence accumulates.
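As an illustration of how those gates, KPIs, and redlines might be combined into a single go/no-go check, here is a minimal Python sketch. The `PilotEvidence` fields and the `promotion_decision` function are hypothetical names chosen for this example, not part of any real framework, and the KPI names are placeholders.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class PilotEvidence:
    """Hypothetical summary of what a pilot has demonstrated."""
    model_risk_review_done: bool
    lineage_documented: bool
    kill_switch_demonstrated: bool
    # KPI name -> whether the pre-agreed threshold was met (names are assumptions).
    kpis_met: dict[str, bool] = field(default_factory=dict)
    # Names of any redlines breached during the evaluation window.
    redlines_breached: list[str] = field(default_factory=list)


def promotion_decision(evidence: PilotEvidence) -> tuple[bool, list[str]]:
    """Return (approved, blockers). Any single blocker stops promotion."""
    blockers: list[str] = []
    if not evidence.model_risk_review_done:
        blockers.append("model risk review incomplete")
    if not evidence.lineage_documented:
        blockers.append("data lineage not documented end-to-end")
    if not evidence.kill_switch_demonstrated:
        blockers.append("kill switch not demonstrated")
    for name, met in evidence.kpis_met.items():
        if not met:
            blockers.append(f"KPI below threshold: {name}")
    for redline in evidence.redlines_breached:
        blockers.append(f"redline breached: {redline}")
    return (not blockers, blockers)
```

Returning the list of blockers alongside the decision keeps the outcome explainable: a rejected pilot shows exactly which gate, KPI, or redline held it back.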

Customer trust is fragile in regulated markets. How do you quantify reputational risk from agentic decisions, and what incident playbook would you activate within the first 24 hours?

I quantify reputational risk using a blended score: severity of impact on the customer, regulatory exposure, and amplification potential across channels. Each agentic decision type gets a preassigned risk tier with response timelines baked in. In the first 24 hours, my playbook triggers a cross-functional stand-up, freezes the implicated agent behaviors, issues transparent customer updates, and conducts an explainability capture so nothing is lost. We then push a rapid remediation path—manual review of affected cases, goodwill adjustments where warranted, and a post-mortem that produces both a customer-facing summary and an internal control upgrade.
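A blended score of this kind could be sketched as a weighted sum mapped to tiers. The weights, the 0-to-1 input scale, the tier cut-offs, and the function names below are all assumptions made for illustration; the interview does not specify them.

```python
# Illustrative weights only; a real program would calibrate and govern these.
WEIGHTS = {
    "customer_severity": 0.5,
    "regulatory_exposure": 0.3,
    "amplification": 0.2,
}


def reputational_risk_score(factors: dict[str, float]) -> float:
    """Blend the three factors (each expected in [0, 1]) into one score in [0, 1]."""
    for name in WEIGHTS:
        value = factors[name]
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
    return sum(WEIGHTS[name] * factors[name] for name in WEIGHTS)


def risk_tier(score: float) -> str:
    """Map a score to a response tier using assumed cut-offs."""
    if score >= 0.7:
        return "tier_1_immediate_response"
    if score >= 0.4:
        return "tier_2_same_day_response"
    return "tier_3_routine_review"
```

For example, a decision type scored at 0.9 for customer severity, 0.8 for regulatory exposure, and 0.5 for amplification lands in the highest tier under these assumed weights.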

Accountability, explainability, and operational risk are top concerns. What human-in-the-loop model, audit trails, and override mechanisms have you found workable at scale?

The workable pattern is tiered oversight: full automation for low-risk, explainable tasks; assisted mode for medium-risk decisions; and mandatory human sign-off for anything that materially affects coverage or payout. Every agent action writes an immutable event log with inputs, intermediate reasoning artifacts, and final recommendations. Overrides are frictionless—one click triggers both a decision reversal and a learning signal to the agent’s policy layer. At scale, this reduces operational risk because people are focused on exceptions, and auditors can replay the decision narrative without a forensic scavenger hunt.
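One common way to approximate an immutable event log in software is a hash chain, where each entry commits to the one before it. The sketch below is an assumption about how such a log could look, not a description of any specific system; `DecisionLog` and its event fields are hypothetical. Note that it makes tampering detectable rather than impossible, so real deployments would pair it with write-once storage.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class DecisionLog:
    """Append-only, hash-chained log of agent actions (illustrative sketch)."""

    def __init__(self) -> None:
        self._entries: list[dict[str, str]] = []

    def append(self, event: dict) -> str:
        """Serialize the event, chain it to the previous entry, and return its hash."""
        prev = self._entries[-1]["hash"] if self._entries else GENESIS
        payload = json.dumps(event, sort_keys=True)
        digest = hashlib.sha256((prev + payload).encode("utf-8")).hexdigest()
        self._entries.append({"prev": prev, "payload": payload, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev = GENESIS
        for entry in self._entries:
            expected = hashlib.sha256(
                (prev + entry["payload"]).encode("utf-8")
            ).hexdigest()
            if entry["prev"] != prev or entry["hash"] != expected:
                return False
            prev = entry["hash"]
        return True

    def replay(self) -> list[dict]:
        """Return the events in order so a reviewer can follow the decision narrative."""
        return [json.loads(entry["payload"]) for entry in self._entries]
```

An override can be logged as just another event (for example, `{"action": "override", "by": "adjuster"}`), which keeps the reversal and the original recommendation in the same replayable sequence.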

In a “human business,” where must people stay firmly in charge, and where can agents take the lead? Share examples where this boundary improved outcomes or backfired.

People must own final decisions that shape customer promises—coverage determinations, claim denials, and complex complaints. Agents can lead in gathering evidence, validating completeness, and proposing consistent next steps. Where we held that boundary, adjusters had more time for empathy and negotiation, and customers felt seen even when outcomes weren’t perfect. Where it backfired was when an agent preempted a delicate outreach with a rigid message; we corrected that by forcing a human handoff for any case with emotional or financial hardship signals before communication goes out.

Executives want value beyond simple cost savings. What revenue, retention, or loss-ratio improvements should be in the business case, and how would you isolate AI’s contribution?

I’d frame the case around better risk selection, lower leakage, and higher service consistency that leads to retention lift. Concretely, target improvements in straight-through underwriting for the right risks and in recovery rates through smarter subrogation. To isolate AI’s contribution, run A/B cohorts with identical eligibility profiles, hold manual processes constant, and measure deltas in conversion, loss severity, and complaint rates. Attribution is strongest when you log decision rationales and can trace each uplift or reduction back to a specific agentic action rather than a blended program effect.
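The cohort comparison described here reduces, at its simplest, to per-metric differences between a treatment cohort and a control cohort. The following sketch assumes each metric is recorded as a list of per-case values (1 or 0 for conversion and complaints); `cohort_deltas` and the metric names are hypothetical, and the example deliberately omits significance testing, which a real evaluation would need.

```python
from statistics import mean


def cohort_deltas(
    control: dict[str, list[float]],
    treatment: dict[str, list[float]],
) -> dict[str, float]:
    """Return treatment-minus-control mean for every metric in the control cohort."""
    missing = set(control) - set(treatment)
    if missing:
        raise ValueError(f"treatment cohort is missing metrics: {sorted(missing)}")
    return {
        metric: mean(treatment[metric]) - mean(control[metric])
        for metric in control
    }


# Toy data for illustration only; not real results.
control = {"conversion": [0, 1, 0, 1], "complaint": [0, 0, 1, 0]}
treatment = {"conversion": [1, 1, 0, 1], "complaint": [0, 0, 0, 0]}
deltas = cohort_deltas(control, treatment)
```

A positive delta on conversion and a negative delta on complaints would both count as improvements, so the sign of each metric needs to be interpreted in context.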

Data quality and consistency are recurring blockers. What data readiness checklist, lineage controls, and remediation steps are mandatory before enabling autonomous actions?

My checklist starts with schema stability, null handling policies, reference data stewardship, and documented feature provenance. Lineage controls must let you trace every model input back to its source system, including transformations and business rules. Before autonomy, run consistency tests across environments and time windows, and set up automated anomaly alerts that halt actions on detected drift. Remediation includes golden-source reconciliation, backfilling with auditable imputation strategies, and a formal sign-off that data contracts will not change without a change-control review.
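To make the "halt on detected drift" idea concrete, here is a deliberately simple Python sketch that compares the share of missing values in a field between a baseline window and the current window. The 5-point tolerance and the function names are assumptions for illustration; production checks would typically cover distributions and schema changes as well.

```python
from typing import Optional, Sequence


def null_rate(values: Sequence[Optional[float]]) -> float:
    """Fraction of entries that are missing (None)."""
    if not values:
        raise ValueError("cannot compute a null rate on an empty window")
    return sum(v is None for v in values) / len(values)


def should_halt(
    baseline: Sequence[Optional[float]],
    current: Sequence[Optional[float]],
    tolerance: float = 0.05,  # assumed tolerance, not taken from the interview
) -> bool:
    """Signal a halt when the null rate moves by more than the tolerance."""
    return abs(null_rate(current) - null_rate(baseline)) > tolerance
```

The point of the design is that the check returns a halt signal rather than a warning, so autonomous actions pause until someone reviews the upstream change.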

Regulators expect clear responsibility and explainability. How do you design decision logs, model cards, and escalation paths that satisfy audits without crippling speed?

Decision logs should capture the minimum set of information that fully reconstructs a choice: sources consulted, constraints applied, alternatives weighed, and the final rationale. Model cards need plain-language purpose statements, limitations, data sources, and monitored fairness metrics, updated on a routine cadence. Escalation paths are pre-mapped to risk tiers so frontline teams don’t improvise under pressure; you get speedy handling because the lane is clear from minute one. By keeping these artifacts lightweight, standardized, and auto-generated, you avoid slowing the system while giving auditors exactly what they need.

Cross-industry collaboration spans insurers, MGAs, brokers, insurtechs, and tech partners. What operating model, shared standards, and IP boundaries enable joint build without gridlock?

Use a hub-and-spoke model: a shared governance hub sets standards, while spokes build domain components with clear interfaces. Adopt common data contracts and event schemas so agents can interoperate without constant translation. Protect IP by separating model weights and proprietary rules from shared protocols and evaluation harnesses. With that structure, you get speed from parallel development and confidence from a common compliance spine.

Operational efficiency is enticing, yet edge cases cause real harm. How do you triage cases for full automation, assisted handling, and mandatory human review?

Triage begins with a risk stratification score that blends policy complexity, claim severity, and context flags like vulnerability indicators. Cases below a defined threshold go to full automation with post-action sampling; the middle band gets agent recommendations with human confirmation; the top tier routes straight to expert handlers. We re-score continuously so a case can graduate or downgrade as new facts arrive. This dynamic gating prevents silent failure on edge cases and focuses expertise where it matters most.
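The three-lane routing described above can be sketched in a few lines of Python. The weights, the two thresholds, and the rule that a vulnerability flag floors the score at the human-review threshold are assumptions made for this example; the interview only states that the score blends these inputs.

```python
AUTOMATION_MAX = 0.3    # assumed upper bound for full automation
HUMAN_REVIEW_MIN = 0.7  # assumed lower bound for mandatory expert review


def stratification_score(
    policy_complexity: float,
    claim_severity: float,
    vulnerability_flag: bool,
) -> float:
    """Blend inputs (each in [0, 1]) into a score in [0, 1]."""
    base = 0.4 * policy_complexity + 0.6 * claim_severity
    # Assumption: a vulnerability indicator never routes below expert review.
    return max(base, HUMAN_REVIEW_MIN) if vulnerability_flag else base


def route(score: float) -> str:
    """Map a score to one of the three handling lanes."""
    if score >= HUMAN_REVIEW_MIN:
        return "human_review"
    if score >= AUTOMATION_MAX:
        return "assisted"
    return "full_automation"


# Re-scoring: the same case changes lanes when a new fact arrives.
before = route(stratification_score(0.1, 0.1, vulnerability_flag=False))
after = route(stratification_score(0.1, 0.1, vulnerability_flag=True))
```

Because `route` is a pure function of the latest score, re-running it whenever new facts arrive is enough to move a case between lanes.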

Leaders fear “unknown unknowns” in live environments. What chaos-testing, sandboxing, and kill-switch strategies have uncovered hidden risks before customers felt them?

I run chaos drills that inject corrupted documents, contradictory evidence, and policy edge conditions into a safe but production-like sandbox. We’re watching for brittle assumptions: does the agent ask for help, or does it plow ahead? The kill switch is tested under load with timed drills so we know we can halt a misbehaving family of agent behaviors in seconds, not hours. These practices surface hidden coupling, like a data dependency that seemed benign until an upstream refresh changed a field definition overnight.
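A kill switch that halts one family of behaviors at a time could be sketched as a thread-safe registry that every action checks before it runs. `KillSwitch`, `run_action`, and the family names are hypothetical; a real system would also need the switch state to be shared across processes and hosts.

```python
import threading
from typing import Callable


class KillSwitch:
    """Thread-safe registry of halted behavior families (illustrative sketch)."""

    def __init__(self) -> None:
        self._halted: set[str] = set()
        self._lock = threading.Lock()

    def halt(self, family: str) -> None:
        with self._lock:
            self._halted.add(family)

    def resume(self, family: str) -> None:
        with self._lock:
            self._halted.discard(family)

    def is_halted(self, family: str) -> bool:
        with self._lock:
            return family in self._halted


def run_action(switch: KillSwitch, family: str, action: Callable[[], str]) -> str:
    """Run the action unless its family is halted; otherwise hand off to a human."""
    if switch.is_halted(family):
        return "escalated_to_human"
    return action()
```

Checking the switch at the start of every action, rather than only at agent start-up, is what lets a timed drill confirm that a halt takes effect on the very next action.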

Strategic alignment is essential. How do you tie agentic AI goals to underwriting appetite, claims philosophy, and distribution strategy, and who owns trade-off decisions?

I map every agent objective to a declared business policy: risk appetite guardrails, fairness thresholds, and service promises. Underwriting defines the edges, claims sets the empathy and leakage balance, and distribution articulates service-level expectations with partners. A cross-functional risk committee owns trade-offs, with a clear decision cadence and pre-agreed arbitration rules. That way, when the agent faces a gray area, the system already knows which principle to prioritize.

Culture and skills can make or break adoption. What roles, training, and incentives turn skeptical teams into safe, capable AI operators within six months?

Stand up roles like AI product owner, model risk steward, and frontline AI operator, with clear handoffs. Training blends hands-on labs, scenario-based drills, and micro-learnings tied to real cases so skills compound week by week. Incentives should reward safe escalations, clean documentation, and customer outcomes—not just throughput. In six months, you want muscle memory: people know when to lean on the agent, when to override, and how to capture lessons that feed the next iteration.

What is your forecast for agentic AI in insurance over the next three years, and what milestones, adoption metrics, and regulatory shifts will define success?

Over the next three years, agentic AI will move from internal pilots to carefully bounded production, with clear evidence of improved service consistency and reduced leakage. Success will look like stable straight-through processing rates in selected workflows, explainability artifacts that withstand audits, and cross-industry standards that make partnerships smoother. Adoption metrics will include a rising share of assisted decisions that free humans for high-empathy tasks, alongside steady or improved customer sentiment. For readers, the signal to watch is simple: when you see transparent model documentation, disciplined escalation paths, and humans still owning the hardest calls, you’ll know the industry has turned hype into durable, human-centered progress.