As the global insurance landscape pivots toward an AI-driven future, the UK market is carving out a distinct, pragmatic path characterized by deliberate execution over rapid disruption. Simon Glairy, a recognized authority in risk management and InsurTech, joins us to discuss how British insurers are navigating the complex intersection of innovation, stringent regulatory frameworks like Consumer Duty, and the operational realities of scaling intelligent systems.

In this conversation, we explore the shift from isolated AI experiments to integrated decisioning environments and the unique challenges of data governance in a highly controlled market. We discuss the strategic move toward third-party data integration, the persistent gap between back-office efficiency and customer personalization, and the evolving role of explainability in automated pricing models.

Many insurers are targeting specific use cases like quote generation rather than pursuing end-to-end AI overhauls. How does this incremental strategy affect long-term internal confidence, and what specific steps can leadership take to prevent these isolated successes from becoming permanent innovation silos?

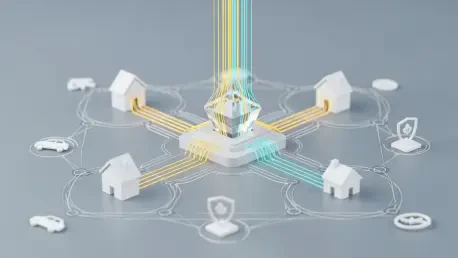

The UK’s incremental approach is actually a sign of maturity, as it allows firms to prove value in high-impact areas like quote generation without the catastrophic risks of a “big bang” failure. This strategy builds internal confidence by delivering immediate wins, but the danger is that these successes become trapped within specific departments, creating a fragmented landscape where AI works in pockets but not across the enterprise. To prevent these silos, leadership must shift from a project-based mindset to a platform-based philosophy, ensuring that the underlying architecture is interoperable from day one. By prioritizing a unified decisioning environment, executives can ensure that an insight gained in underwriting can be instantly utilized by the pricing or claims teams, effectively turning isolated successes into a cohesive competitive advantage.

Generative AI is currently delivering value by processing unstructured data and supporting segmentation. Beyond these immediate efficiency gains, what are the primary governance hurdles when scaling these tools, and how do you ensure that automated outputs remain fully explainable to regulators?

Scaling Generative AI brings the massive challenge of maintaining “explainability” in a world of “black box” logic, particularly under the watchful eye of UK regulators. The primary hurdle is moving beyond simple workflow support to actual decision-making while staying aligned with frameworks like Consumer Duty, which demand that every customer outcome be justified. We ensure this by implementing a “human-in-the-loop” oversight model where AI-generated outputs are subjected to rigorous automated testing against pre-defined fairness metrics, followed by human review, before they ever reach a consumer. It is about creating a paper trail for every model iteration, ensuring that if a regulator asks why a specific segment was priced a certain way, the insurer can point to clear, structured data points rather than an opaque algorithmic suggestion.
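As a rough illustration of testing outputs against a pre-defined fairness metric, the sketch below compares each segment's mean proposed premium with the portfolio-wide mean and flags outliers for human review. The `Quote` type, the ratio metric, and the 1.25 threshold are all assumptions chosen for illustration; they are not drawn from the interview or from any regulatory guidance.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Quote:
    segment: str    # hypothetical segment label
    premium: float  # model-proposed annual premium in GBP


def premium_ratio_by_segment(quotes):
    """Return each segment's mean premium divided by the overall mean premium."""
    overall = mean(q.premium for q in quotes)
    by_segment = {}
    for q in quotes:
        by_segment.setdefault(q.segment, []).append(q.premium)
    return {seg: mean(vals) / overall for seg, vals in by_segment.items()}


def fairness_gate(quotes, max_ratio=1.25):
    """Flag segments whose mean premium exceeds max_ratio times the overall mean.

    The returned dict is a simple audit record that could be stored
    alongside the model version to support a later regulatory query.
    """
    ratios = premium_ratio_by_segment(quotes)
    flagged = sorted(seg for seg, r in ratios.items() if r > max_ratio)
    return {
        "ratios": ratios,
        "flagged_segments": flagged,
        "needs_human_review": bool(flagged),
    }


# Illustrative data only.
quotes = [
    Quote("A", 400.0), Quote("A", 420.0),
    Quote("B", 410.0), Quote("B", 390.0),
    Quote("C", 700.0), Quote("C", 680.0),
]
record = fairness_gate(quotes)
print(record["flagged_segments"], record["needs_human_review"])
```

In practice the metric would be whatever the firm's governance framework defines; the point of the sketch is only that the check runs automatically and leaves a structured record behind.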

While structured governance frameworks are common, many industry leaders feel their current oversight is not keeping pace with technological change. What specific metrics indicate that a framework has become too rigid, and how can firms maintain compliance without sacrificing the speed of development?

A framework has become too rigid when the “time-to-market” for a simple model update begins to stretch into months, or when teams report that more than 50% of their development cycle is spent on manual compliance documentation rather than actual engineering. Another key indicator is a high rate of “abandoned innovation,” where promising AI pilots are shelved because the internal audit process is too cumbersome to navigate for non-standard use cases. To maintain speed, firms must embed governance directly into the DevOps pipeline, using automated compliance checks that flag issues in real time during the coding phase. This moves oversight from being a final “gatekeeper” to a continuous, background process, allowing for both the agility of a tech firm and the security of a regulated institution.
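One way to picture an automated compliance check embedded in a pipeline is a small function that inspects a model's metadata on every commit and returns a list of issues. The sketch below is a minimal example under stated assumptions: the field names in `REQUIRED_FIELDS` and the `uses_protected_attributes` flag are hypothetical and do not correspond to any particular firm's or regulator's schema.

```python
# Hypothetical metadata fields a governance team might require.
REQUIRED_FIELDS = (
    "owner",
    "training_data_source",
    "fairness_report",
    "explanation_method",
)


def compliance_issues(model_card):
    """Return a list of readable issues; an empty list means the check passes."""
    issues = [
        f"missing field: {name}"
        for name in REQUIRED_FIELDS
        if not model_card.get(name)  # absent or empty values both count as missing
    ]
    if model_card.get("uses_protected_attributes"):
        issues.append("model uses protected attributes as direct inputs")
    return issues


# Illustrative metadata for a model under development.
card = {
    "owner": "pricing-team",
    "training_data_source": "policy_history_v3",
    "fairness_report": "",
    "uses_protected_attributes": False,
}
issues = compliance_issues(card)
for issue in issues:
    print("FLAG:", issue)
```

A pipeline step could fail the build whenever the list is non-empty, which is what turns the check from a final gate into a continuous background process.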

Insurers are increasingly turning to third-party data to supplement incomplete or outdated internal records. What is the step-by-step operational process for integrating these external streams into real-time decisioning, and where does this data flow most frequently encounter bottlenecks?

The operational process starts with an ingestion layer that cleans and standardizes disparate external data, followed by a real-time matching engine that connects this third-party insight to a specific policyholder record. Once matched, the data is pushed through a transformation layer where it is converted into features that an AI model can interpret for immediate pricing or underwriting decisions. The most common bottleneck occurs at the “integration point” between the modern data stream and the legacy core system, where old infrastructure often struggles to process high-velocity external data in milliseconds. When these streams hit a 20-year-old policy administration system, the resulting latency can cause quote abandonment, making it essential to use a middle-tier platform that can handle the heavy lifting of real-time decisioning outside the legacy core.
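The three stages described above (ingestion, matching, transformation) can be sketched as three small functions. This is a simplified illustration: the record fields, the exact-match key of postcode plus surname, and the feature definitions are assumptions made for the example, and a production matching engine would typically need fuzzier logic.

```python
from dataclasses import dataclass


@dataclass
class Policyholder:
    policy_id: str
    postcode: str
    surname: str


def normalize_key(postcode, surname):
    """Build a comparable key: postcode without spaces in upper case, surname lower case."""
    return (postcode.replace(" ", "").upper(), surname.strip().lower())


def ingest(raw):
    """Ingestion layer: clean and standardize one external record."""
    postcode, surname = normalize_key(raw["postcode"], raw["surname"])
    return {
        "postcode": postcode,
        "surname": surname,
        "claims_last_5y": int(raw.get("claims_last_5y", 0)),
        "vehicle_value": float(raw.get("vehicle_value", 0.0)),
    }


def build_index(policyholders):
    """Matching engine setup: index policyholders by the normalized key."""
    return {normalize_key(p.postcode, p.surname): p for p in policyholders}


def match(record, index):
    """Matching engine: return the linked policyholder, or None if there is no match."""
    return index.get((record["postcode"], record["surname"]))


def to_features(record):
    """Transformation layer: convert a cleaned record into numeric model features."""
    return [
        float(record["claims_last_5y"]),
        record["vehicle_value"] / 1000.0,               # value in thousands of GBP
        1.0 if record["claims_last_5y"] > 0 else 0.0,   # any-claim indicator
    ]


index = build_index([Policyholder("P-001", "SW1A 1AA", "Smith")])
record = ingest({"postcode": "sw1a 1aa", "surname": " Smith ",
                 "claims_last_5y": "2", "vehicle_value": "12500"})
holder = match(record, index)
features = to_features(record)
print(holder.policy_id, features)
```

Running these stages in a service that sits in front of the policy administration system, rather than inside it, is one way to keep the latency-sensitive work outside the legacy core.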

Operational efficiency in claims and administration currently outpaces the development of personalized, customer-facing capabilities. Why is tailored, real-time engagement proving more difficult to scale than back-office automation, and what specific technical capabilities are missing to bridge this gap?

Back-office automation is “inward-facing” and focuses on predictable, structured tasks like document processing, which are significantly easier to control and measure. Personalization, conversely, requires “outward-facing” AI that must react to unpredictable human behavior in real time while remaining compliant with strict non-discrimination laws. The missing technical capability is often a “unified customer view” that updates dynamically; many UK insurers still have customer data trapped in different silos for motor, home, and life insurance. To bridge this, firms need a real-time orchestration engine that can take an insight, such as a change in a customer’s risk profile, and instantly translate that into a personalized offer or communication across all digital touchpoints.

The next phase of transformation involves connecting AI models directly to active decisioning environments. How does moving toward a unified environment change the daily workflow for a pricing team, and what are the tangible signs that an organization has successfully moved from experimentation to scale?

Moving to a unified environment transforms the pricing team from “data gatherers” into “strategy architects,” as they no longer spend their days manually moving CSV files between disconnected modeling and execution tools. In this new workflow, a pricing analyst can build a model, simulate its impact on the entire portfolio, and deploy it to the live market within a single afternoon, rather than waiting weeks for an IT deployment window. The tangible sign of success is when the organization moves from “static” pricing to “dynamic” pricing, where model updates occur weekly or even daily based on real-world performance. You know you have reached scale when AI is no longer a “special project” talked about in boardrooms, but is instead the invisible engine driving every single quote generated by the company.
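To make the "simulate its impact on the entire portfolio" step concrete, the sketch below reprices a small portfolio under a current and a candidate pricing function and summarizes the difference. The policy fields, both pricing formulas, and the sample portfolio are invented for illustration and are not a description of any insurer's models.

```python
def simulate_impact(portfolio, current_price, candidate_price):
    """Compare total and per-policy premiums under two pricing functions."""
    current = [current_price(p) for p in portfolio]
    candidate = [candidate_price(p) for p in portfolio]
    total_current = sum(current)
    total_candidate = sum(candidate)
    # Relative change for each individual policy.
    changes = [new / old - 1.0 for old, new in zip(current, candidate)]
    return {
        "total_current": total_current,
        "total_candidate": total_candidate,
        "premium_change_pct": 100.0 * (total_candidate / total_current - 1.0),
        "max_individual_increase_pct": 100.0 * max(changes),
    }


def current_price(policy):
    """Hypothetical live model: base premium loaded by the risk score."""
    return policy["base"] * (1.0 + policy["risk"])


def candidate_price(policy):
    """Hypothetical candidate model: a heavier loading on the same risk score."""
    return policy["base"] * (1.0 + 1.5 * policy["risk"])


# Illustrative portfolio of three policies.
portfolio = [
    {"base": 300.0, "risk": 0.0},
    {"base": 400.0, "risk": 0.5},
    {"base": 500.0, "risk": 0.2},
]
impact = simulate_impact(portfolio, current_price, candidate_price)
print(impact)
```

A summary like this, including the largest individual increase, is the kind of evidence an analyst might review before a candidate model is promoted to the live market.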

What is your forecast for the evolution of UK insurance pricing over the next five years?

Over the next five years, I expect the UK market to move away from the current fragmented model toward a state of “Hyper-Personalized Compliance,” where pricing is both more tailored to the individual and more transparent than ever before. We will see a massive shift where the “Consumer Duty” mandate becomes a catalyst for innovation rather than a hurdle, forcing insurers to use AI to prove they are providing fair value to every specific micro-segment. The winners will be those who can balance this “deliberate” British approach with the technical ability to execute in real time, effectively ending the era of the generic annual policy in favor of modular, usage-based insurance. Ultimately, the UK will prove that a highly regulated, cautious market can still be a global leader in AI, provided that trust and explainability remain at the core of the technological surge.