Simon Glairy is a seasoned authority in the evolution of Insurtech and systemic risk management, having spent decades observing the transition from isolated legacy systems to the hyper-connected digital landscape we navigate today. His expertise lies in identifying the invisible threads that bind global markets together, particularly where a single point of failure can lead to a cascade of financial losses. As insurers grapple with the shift from localized hardware to massive cloud ecosystems, Simon provides a critical perspective on how the industry must adapt to risks that are no longer theoretical but are actively reshaping the underwriting agenda.

In this conversation, we explore the paradox of modern digital security: while migrating to the cloud has bolstered baseline defenses for many companies, it has simultaneously created massive concentrations of risk within a handful of providers. We discuss the “hidden” vulnerabilities found in niche software-as-a-service providers—exemplified by the disruptive CDK incident—and why the industry struggles to find a unified approach to aggregation modeling. Simon also sheds light on the “silent” aggregation occurring across multiple insurance lines, the evolving role of artificial intelligence as a human-centric risk, and the existential threat that automated “drive-by” attacks pose to small and medium-sized enterprises that still believe they are under the radar.

Moving from on-premises infrastructure to cloud-based systems has streamlined security updates but concentrated exposure among a few major providers. How are insurers currently differentiating between improved baseline resilience and the systemic danger of a single-point failure? What specific metrics help quantify this concentrated dependency?

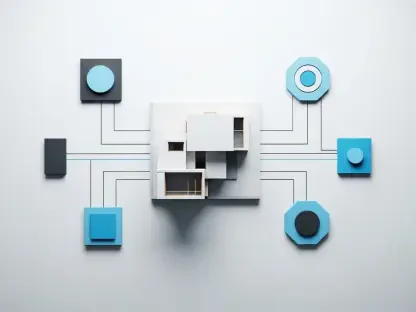

The shift away from on-premises infrastructure was initially seen as a triumphant win for risk quality because it allowed businesses to escape the burden of maintaining dusty, outdated server rooms. Companies no longer had to spend millions of dollars rebuilding IT infrastructure every few years; instead, they could rely on a few major providers to keep their security postures current and patched. However, this convenience has created a dangerous bottleneck where the resilience of thousands of individual firms is now tethered to the uptime of just a handful of cloud giants. Underwriters are now forced to look past individual firewalls and ask what happens if the “trunk” of the tree snaps rather than just a single branch. We quantify this by looking at “market share of dependency,” trying to map how many policyholders in a single portfolio rely on the same primary infrastructure or service provider. It is a stressful balancing act: the frequency of small, localized losses has decreased thanks to better cloud security, but the potential for a single, catastrophic “black swan” event has grown sharply.
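As a rough illustration of the “market share of dependency” idea, the Python sketch below computes, for each provider, the share of a portfolio's total insured limit that relies on it. The record layout, the provider names (`CloudX`, `CloudY`, `CloudZ`), and the choice to weight by limit rather than by policy count are all assumptions made for the example, not a method described in the interview.

```python
from collections import defaultdict


def dependency_share(portfolio):
    """Return {provider: share of total insured limit that depends on it}.

    `portfolio` is a list of dicts with hypothetical keys:
    'insured', 'limit', and 'providers'.
    """
    total_limit = sum(policy["limit"] for policy in portfolio)
    exposed = defaultdict(float)
    for policy in portfolio:
        # set() guards against a provider being listed twice on one policy
        for provider in set(policy["providers"]):
            exposed[provider] += policy["limit"]
    return {provider: amount / total_limit for provider, amount in exposed.items()}


# Hypothetical portfolio; names and limits are invented for illustration.
portfolio = [
    {"insured": "Firm A", "limit": 1_000_000, "providers": ["CloudX"]},
    {"insured": "Firm B", "limit": 2_000_000, "providers": ["CloudX", "CloudY"]},
    {"insured": "Firm C", "limit": 1_000_000, "providers": ["CloudZ"]},
]

shares = dependency_share(portfolio)
for provider, share in sorted(shares.items(), key=lambda kv: -kv[1]):
    print(f"{provider}: {share:.0%} of portfolio limit")
```

Because one policyholder can depend on several providers, the shares can sum to more than 100%. Each figure answers a single question: how much of the book is exposed if this one provider fails.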

Obscure software-as-a-service providers can create massive ripple effects across specific sectors, as seen in recent disruptions within the automotive industry. How do you identify these “hidden” tier-two or tier-three dependencies during the underwriting process? What steps can be taken to map these vulnerabilities before an incident occurs?

The CDK incident was a massive wake-up call for the entire industry because it proved that a systemic loss doesn’t need to involve a household name to be devastating. Before that event, very few people outside the automotive sector had even heard of that specific software-as-a-service provider, yet its disruption paralyzed car dealerships across the United States. To identify these hidden threats, we have to move our gaze beyond the tier-one providers and start investigating the specialized software that acts as the “connective tissue” for specific industries. This requires a much more granular level of questioning during the underwriting process, where we ask clients not just about their cloud provider, but about the mission-critical niche tools they use for daily operations. In effect, we are trying to create a “digital bill of materials” for each sector to see where these dependencies overlap quietly in the background. It is an exhaustive mapping exercise, but without it, we are flying blind into the next sector-wide outage.
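The overlap check behind a “digital bill of materials” can be sketched in a few lines of Python. The example inverts a mapping of insureds to the niche tools they report using, then flags any tool shared by at least `min_insureds` policyholders. The insured names, tool names, and threshold are hypothetical, and the sketch assumes the dependency data has already been collected through underwriting questionnaires.

```python
from collections import defaultdict


def shared_dependencies(bills_of_materials, min_insureds=2):
    """Return {tool: sorted insureds} for tools used by >= min_insureds insureds.

    `bills_of_materials` maps an insured's name to the tools it depends on.
    """
    users = defaultdict(set)
    for insured, tools in bills_of_materials.items():
        for tool in tools:
            users[tool].add(insured)
    return {
        tool: sorted(names)
        for tool, names in users.items()
        if len(names) >= min_insureds
    }


# Hypothetical sector data; every name here is invented for illustration.
dealerships = {
    "Dealer North": ["DealerSuite", "PayrollCo"],
    "Dealer South": ["DealerSuite", "InventoryPro"],
    "Dealer East": ["DealerSuite", "PayrollCo"],
    "Dealer West": ["InHouseDMS"],
}

overlaps = shared_dependencies(dealerships)
for tool, insureds in sorted(overlaps.items(), key=lambda kv: -len(kv[1])):
    print(f"{tool}: {len(insureds)} insureds -> {', '.join(insureds)}")
```

A tool that appears against most of a sector's policyholders is a candidate for a dedicated outage scenario, even when it is not a household name.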

Cyber aggregation modeling is often described as a mix of science and art due to the lack of consistent industry standards. Why do views on accumulation vary so widely among underwriters today? What specific data points or qualitative judgments are necessary to bridge the gap where current modeling tools fall short?

If you put five different underwriters in a room and ask them how they view accumulation, you will likely get five very different, often conflicting, answers. This lack of consistency exists because while we have sophisticated tools to model risk, there is only so far the “science” can go before it hits a wall of unpredictability. We have the data on who uses which cloud, but the “art” comes in when we try to predict the human element—how a hacker might pivot through a network or how quickly a provider can realistically restore services. To bridge this gap, we need more than just technical data points; we need qualitative insights into a company’s incident response culture and their ability to pivot to manual processes when digital systems fail. Current tools often fail to capture the “logic” of an attack, so underwriters must use their intuition to stress-test scenarios that the software hasn’t even considered yet.

Cyber incidents frequently bleed into other lines of business, such as technology E&O and professional liability. How do you manage this “silent” or unintended aggregation in portfolios that are not dedicated to cyber? What protocols should insurers implement to isolate these exposures when a single event triggers multiple policies?

The reality is that a single cyber event can bleed across different lines of insurance with terrifying ease, creating a massive headache for claims departments. When a system goes down, it doesn’t just trigger a cyber policy; it can lead to professional liability claims if services aren’t rendered or technology E&O claims if a software failure causes financial loss for a third party. This “silent” aggregation is one of the most difficult risks to manage because it often sits in portfolios that haven’t been scrutinized with the same rigor as dedicated cyber books. Insurers need to implement strict cross-departmental protocols where every policy is reviewed for its “cyber-sensitivity” to ensure we aren’t doubling or tripling our exposure to the same event. We have to break down the silos between underwriting teams so that a professional liability underwriter knows exactly how a tech failure might impact their specific loss ratio.
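One way to picture a cross-line review is to tally, for a single failure scenario, the limits exposed in every line of business rather than in the cyber book alone. The Python sketch below does this for a hypothetical provider outage. The policy records, line names, and the `dependencies` field are assumptions for illustration, and summed limits are only an upper bound on exposure, not an expected loss.

```python
from collections import defaultdict


def exposure_by_line(policies, failed_provider):
    """Sum policy limits per line of business for policies that depend
    on `failed_provider`. Field names are hypothetical."""
    by_line = defaultdict(float)
    for policy in policies:
        if failed_provider in policy["dependencies"]:
            by_line[policy["line"]] += policy["limit"]
    return dict(by_line)


# Hypothetical policies across three lines; all values are invented.
policies = [
    {"insured": "Firm A", "line": "cyber", "limit": 5_000_000,
     "dependencies": {"VendorX"}},
    {"insured": "Firm A", "line": "tech_eo", "limit": 2_000_000,
     "dependencies": {"VendorX"}},
    {"insured": "Firm B", "line": "professional_liability", "limit": 1_000_000,
     "dependencies": {"VendorX", "VendorY"}},
    {"insured": "Firm C", "line": "cyber", "limit": 3_000_000,
     "dependencies": {"VendorY"}},
]

by_line = exposure_by_line(policies, "VendorX")
total = sum(by_line.values())
for line, limit in by_line.items():
    print(f"{line}: {limit:,.0f}")
print(f"total across lines: {total:,.0f}")
```

In this invented example the cyber book alone shows 5,000,000 of exposure to the outage, while the view across all three lines shows 8,000,000. That gap is the “silent” aggregation.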

Artificial intelligence is often viewed as an evolution of existing IT risks rather than a completely new category, yet it still requires human oversight. How is underwriting changing to account for “human-in-the-loop” failures or AI-driven errors? What specific operational safeguards should companies demonstrate to satisfy modern underwriting criteria?

AI is essentially a different “flavor” of an exposure that has existed for as long as information technology itself, but its speed and scale make it unique. The danger isn’t just the AI making a mistake; it’s the human on the other end who stops checking the work because they have developed a false sense of security in the machine’s output. Underwriting is now shifting to focus heavily on “human-in-the-loop” protocols, where we require companies to demonstrate that they have rigorous fact-checking and oversight mechanisms in place. We want to see that there is a person responsible for auditing AI-generated decisions and that the company isn’t just letting the algorithm run on autopilot. To satisfy modern criteria, businesses must show they have operational safeguards that treat AI as a tool that needs constant supervision rather than a replacement for human judgment.

Many small and mid-sized enterprises assume they are too small to be targeted, yet they often face existential threats from automated “drive-by” phishing attacks. How can the insurance industry better communicate the severity of recovery costs to these business owners? What step-by-step security postures are now considered non-negotiable?

There is a pervasive and dangerous misconception among SMEs that “no one really cares about us,” but they fail to realize that hackers aren’t always targeting them specifically; they are just casting a very wide, automated net. These “drive-by” phishing attacks are opportunistic and hit anyone with a weak defense, often leading to a recovery process that is far from a “five-minute fix.” We have to communicate to these owners that a breach isn’t just a minor inconvenience that costs a couple of hundred pounds; it is an existential threat that can bankrupt a small business through lost revenue and forensic costs. Non-negotiable security postures now include multi-factor authentication, regular employee training to spot phishing, and a verified backup system that is disconnected from the main network. A small business that can’t demonstrate these basic layers of defense is increasingly likely to be uninsurable in the modern market.

What is your forecast for cyber aggregation risk?

My forecast is that cyber aggregation risk will become the defining challenge for the insurance industry over the next decade as digital dependencies deepen and move further into the “unseen” layers of the supply chain. We are moving toward a future where the distinction between a “tech company” and a “traditional company” disappears entirely, meaning every single policy in an insurer’s portfolio will have some level of digital tail-risk. I expect to see a much more aggressive push for transparency from software providers, as insurers will eventually refuse to cover companies that cannot provide a clear map of their third-party and fourth-party dependencies. While our modeling will get better, the “art” of underwriting will remain essential because the speed of technological change will always outpace the historical data we rely on. Ultimately, the winners in this space will be the insurers who stop viewing cyber as a standalone silo and start treating it as the systemic heartbeat of the entire global economy.